How I Learned to Stop Worrying and Love Macros

“A Modest Proposal For Preventing The Children of Rust Developers From Being Exposed To Procedural Macros”

Rust macros are powerful, that’s a fact. I mean, they let you run arbitrary code at compile time; of course they’re powerful.

C macros, which are at the end of the day nothing more than glorified text substitution rules, allow you to implement new, innovative, modern language constructs, such as:

#define ever (;;)
for ever {
    ...
}

or even:

#include <iostream>
#define System S s;s
#define public
#define static
#define void int
#define main(x) main()
struct F{void println(char* s){std::cout << s << std::endl;}};
struct S{F out;};
public static void main(String[] args) {
    System.out.println("Hello World!");
}

But these are just silly examples written for fun. Nobody would ever commit such macro abuse in real-world, production code. Nobody…

/* mac.h 4.3 87/10/26 */
/*
 * UNIX shell
 *
 * S. R. Bourne
 * Bell Telephone Laboratories
 *
 */
...
#define IF if(
#define THEN ){
#define ELSE } else {
#define ELIF } else if (
#define FI ;}
#define BEGIN {
#define END }
#define SWITCH switch(
#define IN ){
#define ENDSW }
#define FOR for(
#define WHILE while(
#define DO ){
#define OD ;}
#define REP do{
#define PER }while(
#undef DONE
#define DONE );
#define LOOP for(;;){
#define POOL }
...
ADDRESS alloc(nbytes)
    POS nbytes;
{
    REG POS rbytes = round(nbytes+BYTESPERWORD,BYTESPERWORD);

    LOOP INT c=0;
        REG BLKPTR p = blokp;
        REG BLKPTR q;
        REP IF !busy(p)
            THEN WHILE !busy(q = p->word) DO p->word = q->word OD
                IF ADR(q)-ADR(p) >= rbytes
                THEN blokp = BLK(ADR(p)+rbytes);
                    IF q > blokp
                    THEN blokp->word = p->word;
                    FI
                    p->word=BLK(Rcheat(blokp)|BUSY);
                    return(ADR(p+1));
                FI
            FI
            q = p; p = BLK(Rcheat(p->word)&~BUSY);
        PER p>q ORF (c++)==0 DONE
        addblok(rbytes);
    POOL
}

This bit of code is taken straight from the original Bourne shell source code, as found in a 4.3BSD-Tahoe source archive. Steve Bourne was an Algol 68 fan, so he tried to make C look more like it.

But Rust macros ain’t like that.

What are Rust macros?

That’s a good question. I’ve got a better one for you:

What are macros?

Macros, at the simplest level, are just things that let you (the user) do other things more quickly than doing said things by hand.

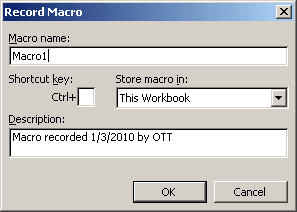

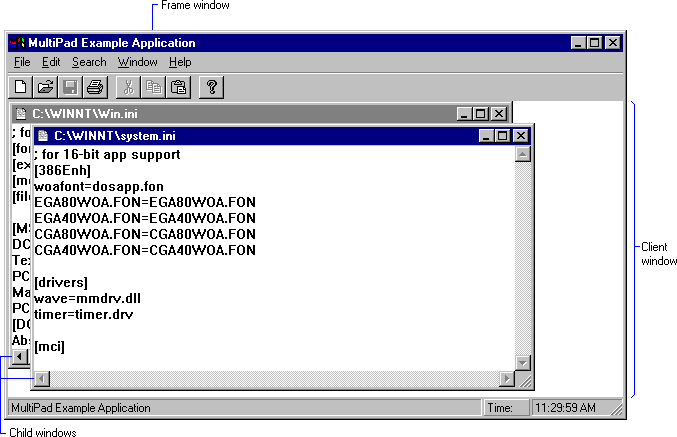

This definition encompasses quite a lot of features you’ve probably already encountered. Of course, if you’re a developer, hearing “macro” often triggers a fight-or-flight response, deeply rooted in a bad experience with C preprocessor macros. If you’re a Microsoft Office power user, “macros” are probably the first thing you’re taught to be afraid of (second only to “moving a picture in a Word document”), thanks in part to the many malware strains that used VBA macros to spread in the early 2000s. This definition even includes the simple “key-sequence” macros that a lot of programs allowed you to record and bind to keys.

I use the past tense here because this trend died out quite a long time ago, around the same time we stopped seeing MDI interfaces.

Back on topic. This is about programming, so the macros we’re talking about are the ones we find in programming languages. Even though the C ones are the most famous (because there’s no other language where there are so few built-in constructs that you have to write macros at one point or another), they weren’t the first ones.

A Brief History of Macros

In the early 1950s, if you wanted to write a program for your company’s mainframe computer, your choices were more limited than today, language-wise. “Portable” languages (Fortran, COBOL, eventually Algol) were a new concept, so basically everything serious was written in whatever machine language your computer understood. Of course, you didn’t write the machine language directly, you used what was and is still called an assembler to translate some kind of textual representation into the raw numeric code data you’d then pass on to the mainframe using punch cards or whatnot.

After some time, assemblers started providing ways to declare “shortcuts” for other bits of code. I’ll commit a bit of an anachronism here by writing x86 assembly targeting Linux. Let’s say you want to make a simple “Hello World” program:

global _start

section .text           ; text segment (code)
_start:
    mov eax, 4          ; syscall (4 = write)
    mov ebx, 1          ; file number (1 = stdout)
    mov ecx, message    ; string to write
    mov edx, length     ; length of string
    int 80h             ; call kernel

    mov eax, 1          ; syscall (1 = exit)
    xor ebx, ebx        ; exit status 0
    int 80h

section .data
message db 'Hello World', 0xA   ; d(efine) b(yte) for the string, with newline
length  equ $ - message         ; length is (current position) - (start)

Don’t worry if you don’t fully understand the code above, it’s not the point. Here, writing a string takes 5 whole lines (loading the syscall number and its 3 arguments into registers, then calling the kernel). Compare this to Python:


print("Hello World")

If only there were a way to tell the assembler that those five lines are really one single operation, that we may want to do often…

%macro print 2          ; define macro "print" with 2 parameters
    mov eax, 4
    mov ebx, 1
    mov ecx, %1         ; message is first parameter
    mov edx, %2         ; length is second parameter
    int 80h
%endmacro

global _start

section .text
_start:
    print message1, length1
    print message2, length2

    mov eax, 1          ; syscall (1 = exit)
    xor ebx, ebx        ; exit status 0
    int 80h

section .data
message1 db 'Hello World', 0xA
length1  equ $ - message1
message2 db 'Simple macros', 0xA
length2  equ $ - message2

Here, the “macro” is just performing simple text substitution. When you write print foo, bar, the assembler replaces the line with everything between %macro and %endmacro, all while also replacing every occurrence of %N with the value of the corresponding parameter. This is the most common kind of macro, and is pretty much what you get in C:


#define PRINT(message, length) write(STDOUT_FILENO, message, length)
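To make the “it’s just text” part concrete, here’s a minimal Rust sketch of what the assembler does with a macro body. The expand_macro function is hypothetical (it is not how NASM is actually implemented): it swaps each %N for the N-th argument and never looks at what the text means.

```rust
/// Hypothetical helper: expand a NASM-style macro body by replacing every
/// `%N` with the N-th argument (1-based), purely as text.
fn expand_macro(body: &str, args: &[&str]) -> String {
    let mut out = body.to_string();
    // Replace higher-numbered parameters first, so that substituting `%1`
    // cannot clobber the beginning of `%10`, `%11`, and so on.
    for (i, arg) in args.iter().enumerate().rev() {
        out = out.replace(&format!("%{}", i + 1), arg);
    }
    out
}
```

Feeding it "%1 * %2" with the arguments "2 + 3" and "4 + 5" yields "2 + 3 * 4 + 5": nothing in a substitution like this knows what an expression is, which is exactly the kind of problem described below.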

Too good to be true

Obviously, it has limitations. What if, in assembly, you did this:

mov eax, 42         ; just storing something in eax
print foo, bar      ; just printing my message
mov ebx, eax        ; where's my 42 at?

Unbeknownst to you, the print macro modified the value of the eax register, so the value you wrote isn’t there anymore!

What if, in C, you did this:

#define SWAP(a, b) int tmp = a; \
                   a = b; \
                   b = tmp;

int main() {
    int tmp = 123;
    // ...
    int x = 5, y = 6;
    SWAP(x, y); // ERROR: a variable named 'tmp' already exists
}

Here, there’s a conflict between the lexical scope of the function (main) and the macro (SWAP): the macro has no scope of its own, so its tmp gets declared right next to main’s. Short of using names like __macro_variable_dont_touch_tmp in your macros, there’s not much you can do to entirely prevent problems like this. What about this:

int main() {
    int x = 5, y = 6, z = 7;
    int test = 100;
    if (test > 50)
        SWAP(x, y);
    else
        SWAP(y, z);
}

The above code does not compile. It walks like correct code and quacks like correct code, but here’s what it looks like after macro-expansion:

int main() {
    int x = 5, y = 6, z = 7;
    int test = 100;
    if (test > 50)
        int tmp = x;
    x = y;
    y = tmp;
    else
        int tmp = y;
    y = z;
    z = tmp;
}

Braceless ifs must contain exactly one statement, but here there are 3 of them! Let’s fix it:

#define SWAP(a, b) {int tmp = a; \
                    a = b; \
                    b = tmp;}

Now, it should work, shouldn’t it? Nope, still broken!

if (test > 50)
    {int tmp = x;
    x = y;
    y = tmp;};
else
    {int tmp = y;
    y = z;
    z = tmp;};

Not seeing it? Let me reformat it for you:

if (test > 50) {
    int tmp = x;
    x = y;
    y = tmp;
}
;
else
...

Since we’re writing SWAP(x, y); with a trailing semicolon, there’s an extra empty statement hanging right there after the code block. That semicolon ends the if statement, so the else is not connected to the if anymore. The solution, obviously, is to do:

#define SWAP(a, b) do{int tmp = a; \
                      a = b; \
                      b = tmp;}while(0)

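Here, the expanded code does the same thing as before, but do { ... } while(0) is a single statement that requires a semicolon after it. SWAP(x, y); therefore expands to exactly one statement, the else stays attached to its if, and the compiler is happy.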
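Rust, which we’ll get to eventually, has a direct counterpart to this trick. Below is a minimal sketch with a hypothetical swap_block! macro: the outer braces delimit the macro arm, and the inner braces turn the whole expansion into one block expression, so it can go anywhere a single expression is expected, such as a match arm without braces. As far as I know, a single-brace version expanding to bare let statements would be rejected in that position.

```rust
// Outer braces: the macro arm. Inner braces: a block expression, so the
// expansion is a single expression (loosely, Rust's do { ... } while (0)).
macro_rules! swap_block {
    ($a:expr, $b:expr) => {{
        let tmp = $a;
        $a = $b;
        $b = tmp;
    }};
}

/// Mirrors the C `if (test > 50) SWAP(x, y); else SWAP(y, z);` example,
/// using match arms, which accept a bare expression.
fn swap_depending_on(test: i32) -> (i32, i32, i32) {
    let (mut x, mut y, mut z) = (5, 6, 7);
    match test > 50 {
        true => swap_block!(x, y),
        false => swap_block!(y, z),
    };
    (x, y, z)
}
```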

Another simple example is

#define MUL(expr1, expr2) expr1 * expr2

int res = MUL(2 + 3, 4 + 5);

This gets expanded to


int res = 2 + 3 * 4 + 5; // bang!

C macros have no knowledge of concepts such as “expressions” or “operator precedence”, so you have to resort to tricks like adding parentheses everywhere:


#define MUL(expr1, expr2) ((expr1) * (expr2))

But… it’s broken? There’s no reason anyone should have to do this sort of syntax wizardry just to get a multiline macro or a macro processing expressions to work!

A few years ago, in the 1960s to be precise, some smart guys in a lab realized that “just replace bits of text by other bits of text” was not, bear with me, the best way to do macros. What if, instead of modifying the textual form of the code, macros could work on an abstract representation of the code, and produce their output in that same form?

SaaS (Software as an S-expression)

This is a Lisp program (and its output):

> (print (+ 1 2))
3

Lisp (for LISt Processing) has a funny syntax. In Lisp, things are either atoms or lists. An atom, as its name implies, is something “not made of other things”. Examples include numbers, strings, booleans and symbols. A list, well, it’s a list of things. A list being itself a thing, you can nest lists. This is a list containing various things (don’t try to run it, it’s not a full program):


(one ("thing" or) 2 "inside" a list)

You may notice that this looks awfully like the program I wrote earlier; that’s no accident: one of Lisp’s basic tenets is that programs are just data. A function call, which most languages would write as f(x, y), can simply be encoded as a list: (f x y).

The technical term for a “thing” (something that is either an atom or a list, with lists written with parentheses and stuff) is s-expression.

When you give Lisp an expression, it tries to evaluate it. A symbol evaluates to the value of the variable with the corresponding name. Other atoms evaluate to themselves. A list is evaluated by looking at its first element, which must be a function, and calling it with the rest of the list as its parameters. You can tell Lisp not to evaluate something using quote, which looks like a function (technically, it’s a special form, but let’s not get into that).

> (+ 4 5)
9
> (quote (+ 4 5))
(+ 4 5)

In the end, you get code like this:

> (print (length (quote (a b c d))))
4

(print... and (length... are evaluated, but (a... is kept as is, because it’s really a list, not a bit of code.

The opposite of quote is called eval:

> (quote (+ 4 5))
(+ 4 5)
> (eval (quote (+ 4 5)))
9

Through this simple mechanism, Lisp allows you to modify programs dynamically as if they were any other kind of data you can manipulate, because they really are any other kind of data you can manipulate.
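The evaluation rules above are small enough to sketch. Here’s a minimal Rust version, assuming a hypothetical Sexp type and eval function with two simplifications: there are no variables (so symbols evaluate to themselves), and the only things it knows how to call are +, * and quote. Note how quote just hands back its argument, and how calling eval on the result of evaluating a quoted list runs it as code.

```rust
/// A tiny s-expression type: an atom (number or symbol) or a list.
#[derive(Debug, Clone, PartialEq)]
enum Sexp {
    Num(i64),
    Sym(String),
    List(Vec<Sexp>),
}

/// Evaluates an s-expression. Simplifications (assumptions of this sketch):
/// no variables, so symbols evaluate to themselves; only `+`, `*` and
/// `quote` are understood.
fn eval(e: &Sexp) -> Result<Sexp, String> {
    match e {
        // Atoms evaluate to themselves.
        Sexp::Num(_) | Sexp::Sym(_) => Ok(e.clone()),
        // Lists: the head decides what happens to the rest.
        Sexp::List(items) => {
            let (head, args) = items.split_first().ok_or("cannot evaluate ()")?;
            match head {
                // quote returns its argument without evaluating it.
                Sexp::Sym(s) if s == "quote" => args
                    .first()
                    .cloned()
                    .ok_or_else(|| "quote needs an argument".to_string()),
                // + and * evaluate every argument first, then combine them.
                Sexp::Sym(s) if s == "+" || s == "*" => {
                    let mut acc = if s == "+" { 0 } else { 1 };
                    for arg in args {
                        match eval(arg)? {
                            Sexp::Num(n) if s == "+" => acc += n,
                            Sexp::Num(n) => acc *= n,
                            other => return Err(format!("not a number: {:?}", other)),
                        }
                    }
                    Ok(Sexp::Num(acc))
                }
                other => Err(format!("not a function: {:?}", other)),
            }
        }
    }
}
```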

Let’s rewrite our MUL macro from before. I’ll define a function which takes two parameters and returns code that multiplies them.

> (define (mul expr1 expr2)
    (list (quote *) expr1 expr2)) ; * is quoted so it appears verbatim in the output
> (mul (+ 2 3) (+ 4 5))
(* 5 9)

That’s not exactly what I want, since I don’t want the operands to be evaluated right at the beginning, so I’ll quote them:

> (mul (quote (+ 2 3)) (quote (+ 4 5)))
(* (+ 2 3) (+ 4 5))
> (eval (mul (quote (+ 2 3)) (quote (+ 4 5))))
45

You’ll notice right away that we don’t have any operator precedence problem like we had in C. But we do have problems: we have to put (quote ...) around every operand to prevent it from being evaluated, and we have to (eval ...) the result to actually run the code that was produced. Since these steps are quite common, they were abstracted away into a builtin called define-macro (provided by several Scheme implementations; Common Lisp’s equivalent is defmacro):

> (define-macro (mul expr1 expr2)
    (list (quote *) expr1 expr2))
> (mul (+ 2 3) (+ 4 5))
45
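Stripped of the REPL, mul is just a function that takes two trees and returns a bigger tree; define-macro only adds the automatic quoting of the arguments and the eval of the result. A minimal Rust sketch of the tree-building part, with hypothetical Sexp, mul and op names and a Display implementation so the result prints like Lisp:

```rust
use std::fmt;

/// Minimal s-expression tree (an assumption of this sketch).
#[derive(Debug, Clone, PartialEq)]
enum Sexp {
    Num(i64),
    Sym(String),
    List(Vec<Sexp>),
}

/// Print trees back in Lisp notation: atoms as-is, lists in parentheses.
impl fmt::Display for Sexp {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        match self {
            Sexp::Num(n) => write!(f, "{}", n),
            Sexp::Sym(s) => write!(f, "{}", s),
            Sexp::List(items) => {
                write!(f, "(")?;
                for (i, item) in items.iter().enumerate() {
                    if i > 0 {
                        write!(f, " ")?;
                    }
                    write!(f, "{}", item)?;
                }
                write!(f, ")")
            }
        }
    }
}

/// Counterpart of the Lisp `mul`: takes two *unevaluated* trees and
/// returns the tree `(* expr1 expr2)`.
fn mul(expr1: Sexp, expr2: Sexp) -> Sexp {
    Sexp::List(vec![Sexp::Sym("*".to_string()), expr1, expr2])
}

/// Hypothetical helper building `(name a b)` from two numbers.
fn op(name: &str, a: i64, b: i64) -> Sexp {
    Sexp::List(vec![Sexp::Sym(name.to_string()), Sexp::Num(a), Sexp::Num(b)])
}
```

Since the operands are subtrees rather than text, mul(op("+", 2, 3), op("+", 4, 5)) prints as (* (+ 2 3) (+ 4 5)) with no extra parentheses needed.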

Here’s what the SWAP macro would look like:

(define-macro (swap var1 var2)
  (quasiquote
    (let ((tmp (unquote var1)))
      (set! (unquote var1) (unquote var2))
      (set! (unquote var2) tmp))))

I’m using functions I haven’t talked about yet. quasiquote does the same thing as quote, that is, return its argument without evaluating it, except that wherever you write (unquote ...) inside it, the argument of unquote is evaluated and the result is inserted in its place. You don’t have to understand this, only that all of these tools are, in the end, nothing more than syntactic sugar for manipulating lists. I could’ve written swap using only list manipulation functions:

(define-macro (swap var1 var2)
  (list 'let (list (list 'tmp var1))
        (list 'set! var1 var2)
        (list 'set! var2 'tmp)))

We could argue that even list is syntactic sugar, and it’s true: the real primitive used to build lists is cons, and (list 1 2 3) can be constructed by doing (cons 1 (cons 2 (cons 3 '()))). '() is a special value that corresponds to the empty list; it’s often used as an equivalent of null.

set! is just what you use to change a variable’s value.
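The cons construction above maps directly onto a classic recursive data type. Here’s a minimal Rust sketch, assuming a list of i32 only (real Lisp lists can hold any value), in which list is just sugar over cons, exactly as described:

```rust
/// A Lisp-style list made of cons cells: each cell holds one element and
/// the rest of the list; `Nil` plays the role of the empty list '().
#[derive(Debug, PartialEq)]
enum List {
    Cons(i32, Box<List>),
    Nil,
}

use List::{Cons, Nil};

fn cons(car: i32, cdr: List) -> List {
    Cons(car, Box::new(cdr))
}

/// `list` as sugar over `cons`: folding from the right means
/// list(&[1, 2, 3]) builds cons(1, cons(2, cons(3, Nil))).
fn list(items: &[i32]) -> List {
    items.iter().rev().fold(Nil, |tail, &x| cons(x, tail))
}

/// Length, computed by following the chain of cells until reaching Nil.
fn length(l: &List) -> usize {
    match l {
        Nil => 0,
        Cons(_, rest) => 1 + length(rest),
    }
}
```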

Quick sidenote: since quote, unquote and quasiquote are very common, there’s even more syntactic sugar in the language to write them more concisely: ' (apostrophe) for quote, , (comma) for unquote and ` (backtick) for quasiquote. The code above, in a real codebase, would look like this:

(define-macro (swap var1 var2)
  `(let ((tmp ,var1))
     (set! ,var1 ,var2)
     (set! ,var2 tmp)))

That’s cheating, Lisp isn’t a real language anyway

I mean, obviously. Real languages have syntaxes way more complex than lists of things. When you look at a real program written in a real language, for example C, you don’t see a list. You see blocks, declarations, statements, expressions.

int factorial(int x)                  // function signature
{                                     // code block
    if (x == 0)                       // conditional statement
    {
        return 1;                     // return statement
    }
    else
    {
        return x * factorial(x - 1);  // expression, function call
    }
}

Well…

(define (factorial x)
  (if (zero? x)
      1
      (* x (factorial (- x 1)))))

Or, linearly:


(define (factorial x) (if (zero? x) 1 (* x (factorial (- x 1)))))

When a compiler or interpreter reads a program, it does something called parsing. It reads a sequence of characters (letters, digits, punctuation, …) and converts it (this is the non-trivial part) into something it can process more easily.

Think about it, when you read the block of C code above, you don’t read a sequence of letters and symbols. You see that it’s a function declaration, with a return type, a name, a list of parameters and a body. Each parameter has a name and a type, and the body is a code block containing an if-statement, itself containing more code.

A data structure that stores things that are either atomic or made of other things is called a tree. Lisp lists (try saying that out loud quickly) can contain atoms or other lists; they’re just one way of encoding trees.

Here, we’re using a tree to store a program’s source code, which is text, but we know that the code respects a set of rules, called the syntax. Oh, and we abstract away non-essential details like whitespace, parentheses and whatnot.

We might as well call that an Abstract Syntax Tree! (a.k.a. AST)

I’ve omitted some details from the diagram above for the sake of brevity, but you get the idea. Code (text) becomes code (tree), and code (tree) matches more closely the mental idea we have of what code (text) means.
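Once code is a tree, grouping is part of the structure instead of something parentheses have to enforce. A minimal Rust sketch of an arithmetic AST (the Expr type and the num, add, mul and eval helpers are hypothetical) makes the MUL example from earlier concrete: the tree we meant and the tree the C preprocessor effectively produced are simply different trees.

```rust
/// A tiny arithmetic AST: each node owns its operands, so there is no
/// precedence to get wrong once the tree exists.
#[derive(Debug)]
enum Expr {
    Num(i64),
    Add(Box<Expr>, Box<Expr>),
    Mul(Box<Expr>, Box<Expr>),
}

fn num(n: i64) -> Expr {
    Expr::Num(n)
}

fn add(a: Expr, b: Expr) -> Expr {
    Expr::Add(Box::new(a), Box::new(b))
}

fn mul(a: Expr, b: Expr) -> Expr {
    Expr::Mul(Box::new(a), Box::new(b))
}

/// Evaluate by walking the tree recursively.
fn eval(e: &Expr) -> i64 {
    match e {
        Expr::Num(n) => *n,
        Expr::Add(a, b) => eval(a) + eval(b),
        Expr::Mul(a, b) => eval(a) * eval(b),
    }
}
```

mul(add(num(2), num(3)), add(num(4), num(5))) evaluates to 45, while the tree for the text 2 + 3 * 4 + 5, add(add(num(2), mul(num(3), num(4))), num(5)), evaluates to 19.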

We reach the same conclusion we had with Lisp: code is data. The only difference is that in Lisp, you can really take code and turn it into data, it’s “built-in”, whereas there’s nothing in C for that. The main reason is that code is a weird kind of data. Numbers are simple; arrays, a bit more convoluted but still simple; code is hard to reason about. Trees and stuff. Lisp is built around dynamic lists, so it’s easy. C is built around human suffering, it’s definitely not made for manipulating code. I mean, imagine writing a parser, or even a compiler in C.

Being able to manipulate code from code is called metaprogramming, and few languages have it built-in. Lisp does it, because it’s Lisp. C# does it too, albeit only for a (large enough) subset of the language, with what they call “expression trees”:

using System;
using System.Linq.Expressions;

var obj = new Foo { A = 123, B = 456 };
Swap(() => obj.A, () => obj.B);

void Swap<T>(Expression<Func<T>> a, Expression<Func<T>> b)
{
    var tmp = Expression.Parameter(typeof(T));
    var code = Expression.Lambda(
        Expression.Block(new [] { tmp },        // T tmp;
            Expression.Assign(tmp, a.Body),     // tmp = [a];
            Expression.Assign(a.Body, b.Body),  // [a] = [b];
            Expression.Assign(b.Body, tmp)));   // [b] = tmp;
    var compiled = (Func<T>) code.Compile();
    compiled();
}

class Foo
{
    public int A;
    public int B;
}

It’s a bit more complicated than in Lisp, because here, the way to achieve what we did with quote (i.e., pass an unevaluated expression to a function) involves declaring a parameter with the Expression<T> type, with T being a function type. This means that you can’t pass any expression directly; you must pass a lambda returning that expression (hence the () =>), which the compiler then turns into an expression tree instead of executable code. In other words, () => x is the closest C# provides to (quote x).

We don’t have eval either; instead, we can compile an expression we built into a real function we can then call (and it’s that call that does what eval would do in Lisp in that context).

Building code is also more complicated: since C# code is not made of lists, you can’t just create a sequence of things and call it a day, code here is stored as objects (“expression trees”) that you build using functions such as Expression.Assign or Expression.Block.

All of this also means that only a subset of the language is available through this feature: you can’t declare classes inside an expression tree, for example. At the end of the day, it’s not really a problem: most problems solved by macros are solved through other means in C#, and this metaprogramming-like expression tree wizardry is almost only ever used in contexts where only simple expressions are involved.
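The “compile it instead of eval-ing it” approach isn’t specific to C#. As a rough analogue (not how .NET implements Compile), here’s a minimal Rust sketch that turns a small expression tree into a closure once, so calling the result no longer walks the tree. The Expr type, which has a single input variable x, and the compile function are hypothetical.

```rust
/// A small expression tree over a single input variable `x`.
enum Expr {
    Num(i64),
    Var, // the input variable x
    Add(Box<Expr>, Box<Expr>),
    Mul(Box<Expr>, Box<Expr>),
}

/// Walk the tree once and build a closure that computes the same value.
/// Each node becomes a closure that calls the closures of its children.
fn compile(e: &Expr) -> Box<dyn Fn(i64) -> i64> {
    match e {
        Expr::Num(n) => {
            let n = *n;
            Box::new(move |_x: i64| n)
        }
        Expr::Var => Box::new(|x: i64| x),
        Expr::Add(a, b) => {
            let (fa, fb) = (compile(a), compile(b));
            Box::new(move |x: i64| fa(x) + fb(x))
        }
        Expr::Mul(a, b) => {
            let (fa, fb) = (compile(a), compile(b));
            Box::new(move |x: i64| fa(x) * fb(x))
        }
    }
}
```

For the tree x * (x + 1), compile returns a closure that yields 12 for 3 and 110 for 10; building it costs one tree walk, after which each call is just nested closure calls.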

Long Recaps Considered Harmful

3,000! If you’re still there, you’ve just read 3,000 words of me rambling about old languages and weird compiler theory terminology. This post was supposed to be about Rust macro abuse.

Rust has macros. Twice.

Rust supports two kinds of macros: declarative macros and procedural macros.

Declarative macros are a bit like C macros, in that they can be quite easy to write, although in Rust they are much less error-prone. See for yourself:

macro_rules! swap {
    ($a:expr, $b:expr) => {
        let tmp = $a;
        $a = $b;
        $b = tmp;
    };
}

fn main() {
    let (mut a, mut b) = (123, 456);
    swap!(a, b);
    println!("a={} b={}", a, b);
}

macro_rules! mul {
    ($a:expr, $b:expr) => {
        $a * $b
    };
}

fn main() {
    println!("{}", mul!(2 + 3, 4 + 5)); // no operator precedence issue
}
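The swap! example hides the most interesting part: the macro’s tmp can’t collide with the caller’s, because macro_rules! macros are hygienic for local variables. A sketch reproducing the C failure cases (the function names are just for illustration):

```rust
// The same swap! macro as above.
macro_rules! swap {
    ($a:expr, $b:expr) => {
        let tmp = $a;
        $a = $b;
        $b = tmp;
    };
}

/// The C failure case: the caller already has its own `tmp` in scope.
fn swap_next_to_callers_tmp() -> (i32, i32, i32) {
    let tmp = 123;
    let (mut x, mut y) = (5, 6);
    swap!(x, y);
    // This `tmp` still refers to the caller's variable, not the macro's.
    (tmp, x, y)
}

/// Worse for a textual macro: the variable being swapped is named `tmp`.
fn swap_callers_tmp() -> (i32, i32) {
    let (mut tmp, mut x) = (1, 2);
    swap!(tmp, x); // the macro's own `tmp` is a different binding
    (tmp, x)
}
```

With C’s SWAP, the second case would expand to int tmp = tmp; and fall apart; here both functions behave as if the macro’s variable had a name nobody else could ever use.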

Declarative macros can operate on various parts of a Rust program, such as expressions, statements, blocks, or even specific token types, such as identifiers or literals. They can also operate on raw token trees, which allow for interesting code manipulation techniques. But even though they work in a cleaner way and support advanced patterns, with repetitions and optional parameters, they’re still just a more advanced form of substitution. So, closer to C macros.
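The difference between expr fragments and raw token trees is exactly what keeps the mul! example correct. In the sketch below, mul_tokens! is a hypothetical macro written only to show the contrast: it captures each operand as individual tokens, which are re-parsed after substitution and bring the C precedence bug right back.

```rust
// `expr` fragments are parsed up front and substituted as one opaque
// node, so the grouping of each operand survives.
macro_rules! mul_expr {
    ($a:expr, $b:expr) => {
        $a * $b
    };
}

// `tt` fragments are raw tokens, re-parsed after substitution. This
// hypothetical macro only accepts operands shaped like `x op y`.
macro_rules! mul_tokens {
    ($a1:tt $op_a:tt $a2:tt, $b1:tt $op_b:tt $b2:tt) => {
        $a1 $op_a $a2 * $b1 $op_b $b2
    };
}

/// Returns (result with expr fragments, result with token trees).
fn compare() -> (i32, i32) {
    (mul_expr!(2 + 3, 4 + 5), mul_tokens!(2 + 3, 4 + 5))
}
```

The first value is 45; the second is 19, because the token version expands to 2 + 3 * 4 + 5, just like the C macro.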

Procedural macros, on the other hand, are more like Lisp macros. They’re written in Rust, get passed a token stream (a list of tokens from the source code) and are expected to give one back to the compiler. Apart from that, they can do basically anything.

They look like this:

use proc_macro::TokenStream;

// Must be defined in a crate whose Cargo.toml sets proc-macro = true.
#[proc_macro]
pub fn macro_name(input: TokenStream) -> TokenStream {
    todo!()
}

They’re often used for code generation, for example to generate methods from a struct definition:

#[derive(Debug)]
struct Person {
    name: String,
    age: u8
}

This generates an implementation of the Debug trait for the Person type, which lets you get a human-readable version of any Person object when needed (pretty-printed with {:#?}). derive(PartialEq) and derive(PartialOrd) generate equality and ordering methods, etc.
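To give an idea of what the derive writes for you, here’s a hand-written implementation that behaves roughly like #[derive(Debug)] on Person. It’s an approximation built on the standard Formatter::debug_struct helper; the code the compiler actually generates differs in its details.

```rust
use std::fmt;

struct Person {
    name: String,
    age: u8,
}

// Hand-written approximation of what #[derive(Debug)] provides: print the
// struct name followed by each field name and its Debug representation.
impl fmt::Debug for Person {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        f.debug_struct("Person")
            .field("name", &self.name)
            .field("age", &self.age)
            .finish()
    }
}
```

Formatting Person { name: "Ferris".to_string(), age: 7 } with {:?} gives Person { name: "Ferris", age: 7 }. Writing this by hand for every struct, and keeping it in sync with the fields, is precisely the chore the derive macro removes.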

But there are less… orthodox uses for procedural macros.

Mara Bos famously wrote some interesting crates. The first one, inline_python, lets you run Python code from Rust seamlessly, with bidirectional interaction (for variables):

use inline_python::python;

fn main() {
    let who = "world";
    let n = 5;
    python! {
        for i in range('n):
            print(i, "Hello", 'who)
        print("Goodbye")
    }
}

I highly recommend reading her blog post series on the subject, where she goes into great detail on how to implement such a macro. It involves lots of subtle tricks needed to deal with how the Rust compiler reads and tokenizes code, how errors can be mapped from Python to Rust, etc.

She also wrote whichever_compiles, which runs multiple instances of the compiler to find… whichever bit of code compiles, among a list you provide:

use whichever_compiles::whichever_compiles;

fn main() {
    whichever_compiles! {
        try { thisfunctiondoesntexist(); }
        try { invalid syntax 1 2 3 }
        try { println!("missing arg: {}"); }
        try { println!("hello {}", world()); }
        try { 1 + 2 }
    }
}

whichever_compiles! {
    try { }
    try { fn world() {} }
    try { fn world() -> &'static str { "world" } }
}

The macro forks the compiler process at compile time, and each child process tries one of the branches. The first one to compile wins, and the winning branch is used for the rest of the build process.

After a series of unfortunate events, I was informed of the existence of procedural macros, and decided that I had to make one. After some nights of work, I brought to the world embed-c, the first procedural macro to allow anyone to write pure, unadulterated C code in the middle of a Rust code file. Complete with full interoperability with the Rust code, obviously. This has made a lot of people very angry and been widely regarded as a bad move.

use embed_c::embed_c;

embed_c! {
    int add(int x, int y) {
        return x + y;
    }
}

fn main() {
    let x = unsafe { add(1, 2) };
    println!("{}", x);
}

It uses a library called C2Rust which, really, is what it sounds like. It’s a toolset that relies on Clang to parse and analyze C code, and generates equivalent (in behavior) Rust code. Obviously, the generated code is not idiomatic, and quickly becomes unreadable if you use enterprise-grade C control flow features such as goto. But can Rust really replace C in the industry without a proper implementation of Duff’s device?

embed_c! {
    void send(to, from, count)
        register short *to, *from;
        register count;
    {
        register n = (count + 7) / 8;
        switch (count % 8) {
        case 0: do { *to++ = *from++;
        case 7:      *to++ = *from++;
        case 6:      *to++ = *from++;
        case 5:      *to++ = *from++;
        case 4:      *to++ = *from++;
        case 3:      *to++ = *from++;
        case 2:      *to++ = *from++;
        case 1:      *to++ = *from++;
                } while (--n > 0);
        }
    }
}

fn main() {
    let mut source = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10];
    let mut dest = [0; 10];
    unsafe { send(dest.as_mut_ptr(), source.as_mut_ptr(), 10); };
    assert_eq!(source, dest);
}

After seeing Mara’s inline_python crate, I was taken aback by her choice of such an outdated language — Python was created in 1991!

VBScript, first released in 1996, is a much more modern language than Python. It provides transparent COM interoperability and is supported out of the box on every desktop version of Windows since 98. Even Windows CE on ARM is supported, and has been since 2000, whereas Python didn’t run on Windows on ARM until 3.11 (2022).

As such, I had no other choice but to create inline_vbs, for all your daily VBS needs.

use inline_vbs::*;

fn main() {
    vbs![On Error Resume Next]; // tired of handling errors?
    vbs![MsgBox "Hello, world!"];
    if let Ok(Variant::String(str)) = vbs_!["VBScript" & " Rocks!"] {
        println!("{}", str);
    }
}

It relies on the Active Scripting APIs, which were originally designed to allow vendors to add scripting support to their software, and it’s actually a nice idea. You can have multiple language providers, and a program relying on the AS APIs would automatically support all installed languages. The most common were JScript and VBScript, because they were installed by default on Windows, but you could add support for Perl, REXX or even Haskell. Haskell! Think about it. This means that on a computer with the Haskell provider installed, this bit of code would be valid and would kinda work in Internet Explorer:

<HTML>
<HEAD>
    <TITLE>Active Scripting demo</TITLE>
</HEAD>
<BODY>
    <H1>Welcome!</H1>
    <SCRIPT LANGUAGE="HASKELL">
main :: IO ()
main = putStrLn "Hello World"
    </SCRIPT>
</BODY>
</HTML>

One major pain point is that VBScript, like Python, is a dynamic language, where a variable can hold a value of any type and change type at runtime, something that statically-typed languages like Rust are proud to say they do not like at all, thank you very much.

Since VBScript is handled through COM APIs, values are transferred using the VARIANT COM type, which is pretty much a giant union of every COM type under the sun. Luckily, this matches up perfectly with Rust’s discriminated unions — I take it as a sign from the universe that Rust and VBScript were made to work together.
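Here’s a minimal Rust sketch of that correspondence. ComValue is a hypothetical, heavily reduced stand-in for VARIANT: it is not the type inline_vbs exposes, and the real VARIANT has many more cases. The enum’s tag plays the role of VARIANT’s vt type field, and match forces every possible runtime type to be handled.

```rust
/// Hypothetical, much-reduced model of a COM VARIANT: the enum tag stands
/// in for the `vt` type field, and each variant carries its payload.
#[derive(Debug, Clone, PartialEq)]
enum ComValue {
    Empty,
    Null,
    Bool(bool),
    I32(i32),
    F64(f64),
    Str(String),
}

/// A dynamically-typed script result arrives as one of these; the
/// compiler makes us handle every possible runtime type explicitly.
fn describe(v: &ComValue) -> String {
    match v {
        ComValue::Empty => "empty".to_string(),
        ComValue::Null => "null".to_string(),
        ComValue::Bool(b) => format!("bool {}", b),
        ComValue::I32(n) => format!("int {}", n),
        ComValue::F64(x) => format!("double {}", x),
        ComValue::Str(s) => format!("string {:?}", s),
    }
}

/// Extracting one specific type is fallible, much like the `if let` in
/// the inline_vbs example above.
fn as_string(v: &ComValue) -> Option<&str> {
    match v {
        ComValue::Str(s) => Some(s.as_str()),
        _ => None,
    }
}
```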

That’s pretty much it for today.